Mapping & Navigation

Simultaneous Localization and Mapping

One of the key features that differentiates the Misty Advanced edition robot from the Misty Standard edition is autonomy. Misty Advanced edition is intended for autonomous use cases that require more complex navigation tasks and self-charging. To this end, Advanced robots contain an additional sensor and software package that enables SLAM, as well as a wireless charging dock. When used together, these components allow Misty to map and navigate her environment. Creating and using a map with Misty involves several steps and some terminology that you should become familiar with.

Terminology

- Pose - the robot's pose represents the robot's location and orientation in three-dimensional space. If the robot "has pose", it is receiving consistent, high-quality updates from the SLAM system. If the robot has "lost pose", it no longer understands its location.

- Exploring - this is the process by which Misty discovers her surroundings and creates a map.

- Localization - this is the process of determining where the robot is within a map.

- Relocalization - generally experienced after a loss of pose, this is the process by which the robot re-determines its location within a given map.

- Tracking - this is the process of using an existing map, in conjunction with pose, to understand the robot's current location.

- Occupancy grid - this is a two-dimensional, top-down map, represented as a two-dimensional array of bytes, each byte indicating the status of that cell.

Occupancy grid cell states may be:

- Unknown (0) - the robot has no knowledge of this cell

- Open (1) - the robot can traverse this cell

- Occupied (2) - this cell cannot be traversed because of an obstacle

- Covered (3) - this cell could be driven under (like a table, perhaps)
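As a minimal sketch of working with this representation, the Python below models a small occupancy grid using the four state values listed above. The grid contents, the display symbols, and the helper names are all hypothetical and chosen only for illustration. Treating only Open cells as drivable is also an assumption of this sketch, not a documented rule.

```python
from collections import Counter
from enum import IntEnum


class CellState(IntEnum):
    """Occupancy grid cell states, using the values listed above."""
    UNKNOWN = 0
    OPEN = 1
    OCCUPIED = 2
    COVERED = 3


# Hypothetical 3x4 grid; each row is a bytes object, one byte per cell.
grid = [
    bytes([0, 1, 1, 2]),
    bytes([0, 1, 3, 2]),
    bytes([0, 0, 1, 2]),
]

# One display character per state, chosen only for this example.
SYMBOLS = {
    CellState.UNKNOWN: "?",
    CellState.OPEN: ".",
    CellState.OCCUPIED: "#",
    CellState.COVERED: "~",
}


def count_states(rows):
    """Count how many cells are in each state."""
    return Counter(CellState(cell) for row in rows for cell in row)


def render(rows):
    """Render the grid as text, one line per row."""
    return "\n".join(
        "".join(SYMBOLS[CellState(cell)] for cell in row) for row in rows
    )


def is_traversable(rows, row, col):
    """Only Open cells are treated as drivable in this sketch."""
    return CellState(rows[row][col]) is CellState.OPEN


print(render(grid))
print(count_states(grid))
```

Iterating over a `bytes` object yields integers, so each cell converts directly to a `CellState`.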

Getting Started

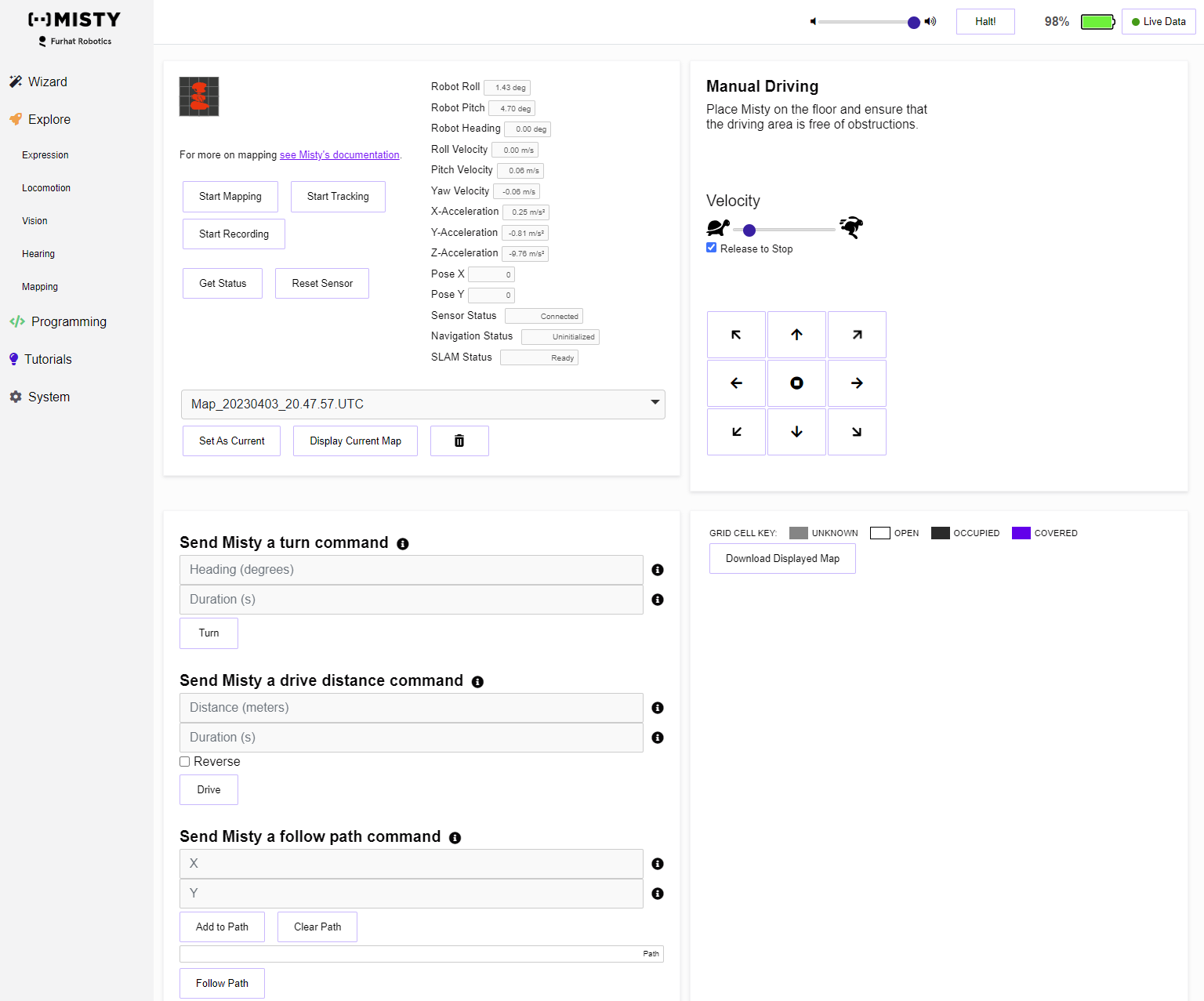

To get started, navigate to Misty Studio, then to the Explore menu, then Mapping. The page presented should look something like this.

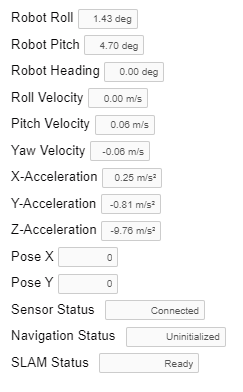

There are some key elements on this screen that you should be aware of when using the mapping functions. First, take note of the data panel.

This section contains relevant orientation details from the robot's IMU, and from the Occipital Structure Core sensor that Misty uses for mapping. Note that data values here are somewhat noisy, and that single readings observed in isolation may seem unexpected. Some filtering is applied before the data is passed back to Misty Studio, but it won't smooth every outlier. In addition, the status items (Sensor Status, Navigation Status, and SLAM Status) describe what is happening with the Structure Core sensor.
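If you consume these values in your own code and want to damp the occasional outlier yourself, a rolling median is one simple option. The sketch below is not the filtering Misty applies. The class name, window size, and sample readings are hypothetical.

```python
from collections import deque
from statistics import median


class RollingMedian:
    """Median of the most recent `window` readings."""

    def __init__(self, window=5):
        # deque with maxlen drops the oldest reading automatically.
        self._values = deque(maxlen=window)

    def update(self, reading):
        """Add a reading and return the median of the current window."""
        self._values.append(reading)
        return median(self._values)


# Hypothetical pitch readings in degrees, with one spike.
readings = [1.0, 1.2, 0.9, 25.0, 1.1, 1.0]
smoother = RollingMedian(window=3)
smoothed = [smoother.update(r) for r in readings]
print(smoothed)
```

With a window of three, the single 25.0 spike never becomes the median, so it does not appear in the smoothed values. A larger window rejects longer bursts at the cost of more lag.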

Note: Misty's heading, as presented here, comes from the IMU in the chassis. This IMU only understands orientation relative to its first reading. You'll notice this most with bearing, which represents the robot's rotation. When the IMU first starts, the bearing is set to zero, and all subsequent readings are measured relative to that.
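Because the bearing is relative to wherever the robot happened to be facing when the IMU started, one way to get a repeatable heading is to record the IMU bearing while the robot faces a known reference direction and apply the difference as an offset. The sketch below shows only that arithmetic. The class and method names are hypothetical, and it assumes bearings are expressed in degrees.

```python
class BearingReference:
    """Converts IMU bearings into a frame anchored to a known direction."""

    def __init__(self, imu_bearing_at_reference, reference_heading=0.0):
        # Offset between the chosen reference frame and the IMU's own zero.
        self._offset = reference_heading - imu_bearing_at_reference

    def to_reference_frame(self, imu_bearing):
        """Return the bearing in the reference frame, wrapped to [0, 360)."""
        return (imu_bearing + self._offset) % 360.0


# Hypothetical: the IMU read 75 degrees while the robot faced the reference direction.
reference = BearingReference(imu_bearing_at_reference=75.0)
print(reference.to_reference_frame(120.0))  # 45.0
print(reference.to_reference_frame(30.0))   # 315.0
```

The offset has to be recorded again whenever the IMU restarts, since its zero point changes.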

Sensor Status includes the following states, though most will not be observed during normal operation:

- Uninitialized

- Connected

- Booting

- Ready

- Disconnected

- Error

- UsbError

- LowPowerMode

- RecoveryMode

- ProdDataCorrupt

- CalibrationMissingOrInvalid

- FWVersionMismatch

- FWUpdate

- FWUpdateComplete

- FWUpdateFailed

- FWCorrupt

- EndOfFile

- USBDriverNotInstalled

- Streaming

Navigation Status includes the following states:

- Uninitialized

- Tracking

- Exploring

- Relocalizing

- Paused

- ExportingScene

- NotAvailable

SLAM Status includes the following states, which exist in combination:

- Uninitialized

- Initializing

- Ready

- Exploring

- Tracking

- Recording

- Resetting

- Rebooting

- HasPose

- LostPose

- ExportingMap

- Error

- ErrorSensorNotConnected

- ErrorSensorNoPermission

- ErrorSensorCantOpen

- ErrorPowerDownRobot

- Streaming

- DockingStationDetectorEnabled

- DockingStationDetectorProcessing
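To illustrate what "exist in combination" means, the sketch below models a few of these states as combinable flags. The bit values are generated by `auto()` and are placeholders, not the values Misty reports. The `describe` helper and the example status are hypothetical.

```python
from enum import Flag, auto


class SlamState(Flag):
    """A subset of the SLAM states listed above.

    Bit values come from auto() and are placeholders for this sketch.
    """
    Ready = auto()
    Exploring = auto()
    Tracking = auto()
    HasPose = auto()
    LostPose = auto()
    Streaming = auto()


def describe(status):
    """List the names of every state present in a combined status."""
    return [state.name for state in SlamState if state in status]


# A hypothetical status while mapping with good pose.
status = (
    SlamState.Ready
    | SlamState.Exploring
    | SlamState.HasPose
    | SlamState.Streaming
)

print(describe(status))
print(SlamState.HasPose in status)
```

A single status value can therefore say that the system is exploring, streaming, and has pose all at once, and each state can be tested independently.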

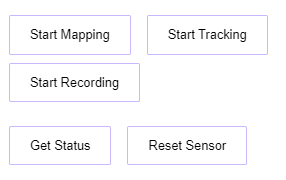

Within Misty Studio, access to the SLAM controls is very direct. These controls aren't designed to represent the ideal way to generate a map; rather, they provide push-button access to the core functionality. The controls are a simple series of buttons.

Button functionality is:

- Start Mapping - Puts the system into an "Exploring" state, which begins the process of creating a map. After mapping has started, the functionality will change to "Stop Mapping".

- Start Tracking - Uses the current map for tracking, localizing within it. After tracking has started, this functionality will change to "Stop Tracking".

- Start Recording - For advanced use cases, this records a data set from the Structure Core sensor as a point cloud. More complete documentation is forthcoming.

- Get Status - Gets the current status of the Structure Core sensor and SLAM system.

- Reset Sensor - In case of error, this reboots the Structure Core sensor. Note that this is a firmware-level reboot, which may differ from a power-cycle.

While creating a map or tracking, the key thing to verify is that the robot has pose. Misty Studio has a clear indicator showing whether the robot has pose.

When Misty has pose, this indicator will be white. When Misty doesn't have pose, this will be red.

Note: This data is currently captured by subscribing to the SelfState message, though that message is slated for decomposition and deprecation. In future releases, expect that message to be replaced by smaller, more specific messages.

Manually Creating a Map

Manually creating a map through Misty Studio is a simple process, but it's worth reading the tips for success below to improve your chances of good results. To create a simple map, follow these steps:

- Place Misty in the environment which you'd like to map, ideally against a wall, facing into the room

- Click the "Start Mapping" button and wait for the pose indicator to change from red to white

- Using the driving controls, drive Misty forward by around 1 meter

- Using the driving controls, rotate Misty 360 degrees

- While mapping, the occupancy grid will render in the lower right portion of the page, refreshing every 5 seconds

- Click the "Stop Mapping" button

While this map is very simple, the same process can be repeated at a larger scale or automated.
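As a rough sketch of what automating these steps could look like, the function below runs them in order through two injected callables, so it can be exercised without a robot. The endpoint paths and payload field names are assumptions to confirm against the API reference for your robot. The velocity values and drive durations are placeholders that you would need to calibrate for roughly 1 meter of travel and one full rotation.

```python
import time

# Assumed REST endpoints; confirm each against the API reference for your robot.
START_MAPPING = "/api/slam/map/start"
STOP_MAPPING = "/api/slam/map/stop"
DRIVE_TIME = "/api/drive/time"


def create_simple_map(post, has_pose, forward_ms, rotate_ms,
                      pose_timeout_s=60.0, poll_s=1.0, sleep=time.sleep):
    """Run the manual mapping steps in order.

    post(path, payload) sends one command to the robot.
    has_pose() returns True once the robot reports pose.
    Returns True if the drive sequence ran, False if pose never arrived.
    """
    post(START_MAPPING, {})

    # Wait for pose before driving, giving up after the timeout.
    waited = 0.0
    while not has_pose():
        if waited >= pose_timeout_s:
            post(STOP_MAPPING, {})
            return False
        sleep(poll_s)
        waited += poll_s

    # Drive forward, then rotate in place. Velocities are placeholders.
    post(DRIVE_TIME, {"LinearVelocity": 20, "AngularVelocity": 0,
                      "TimeMs": forward_ms})
    sleep(forward_ms / 1000.0)
    post(DRIVE_TIME, {"LinearVelocity": 0, "AngularVelocity": 20,
                      "TimeMs": rotate_ms})
    sleep(rotate_ms / 1000.0)

    post(STOP_MAPPING, {})
    return True


# Dry run with stand-ins for the robot: record the commands, skip the waits.
sent = []
ok = create_simple_map(
    post=lambda path, payload: sent.append(path),
    has_pose=lambda: True,
    forward_ms=5000,
    rotate_ms=8000,
    sleep=lambda seconds: None,
)
print(ok, sent)
```

In real use, `post` would wrap an HTTP client pointed at the robot's address, and `has_pose` would check the robot's reported SLAM status. Keeping both as parameters leaves the sequencing logic testable on its own.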

Tips for Success

Robust indoor navigation is a pretty hard problem, and there are many edge cases that can cause issues. That said, there are a few things that you can do to have better results:

- Good, consistent lighting is key. The sensor works best in brightly lit spaces where the lighting stays consistent.

- Glass walls and doors are a problem. Because the sensor is using reflected light to calculate depth in the scene, those reflections can result in huge errors in the depth measurements, which the sensor can't understand.

- Similarly, shiny floors will cause a loss of the ground plane. The robot will think that the floor is twice as deep as it is, and read it as a dropoff.

- Really open areas are tough. If the robot has several meters of empty space in any direction, there won't be any depth detected.

- Similarly, really constrained areas are problematic. Anything under 0.3 meters won't return reliable depth.

- When performing SLAM, what is being observed in the data is called the 'scene'. This scene works best when there's good visual detail. All of the computer vision algorithms are matching contours, corners, and shapes on the walls and in the furniture. Blank, empty spaces aren't good for SLAM.

- Expect the robot to lose pose often. In those cases, backtracking will generally regain pose.

- In some cases, the orientation of the robot's head can play a key role in keeping pose. Misty is pretty close to the ground, which means that she's seeing a LOT of ground plane. Looking slightly up or slightly down can sometimes help overcome pose loss when traversing an area.

- Similarly, anything that introduces a lot of oscillation to the robot can cause pose loss. Rough carpet that causes the head to bounce during driving is a big issue, as are fast, bouncy turns. In these cases, pose loss is hard to recover from.

- Mapping is extremely processor intensive. As such, refrain from further taxing Misty while mapping. Tracking is still expensive, but significantly less so than the initial map creation.

- Map generation is handled entirely in memory, which means that maps can only be of a finite size. Unfortunately, this doesn't always translate well to an area, though we tend to say that Misty can map around 90 square meters. In reality, the composition of the scene has some impact on that total size.